Log Management

Simple, Integrated Log Collection, Alerting & Archiving with WhatsUp Gold

Log Management Overview

WhatsUp Gold Log Management provides easy visibility and management of device log data, all integrated into an industry-leading interface. You can monitor, filter, search and alert on logs for every device in your network while also watching for meta trends like changes in log volume. You can also filter and archive logs to any storage location for any retention period to comply with regulatory requirements and preserve historical data. The result is world-class network monitoring and powerful log management in one easy-to-use solution.

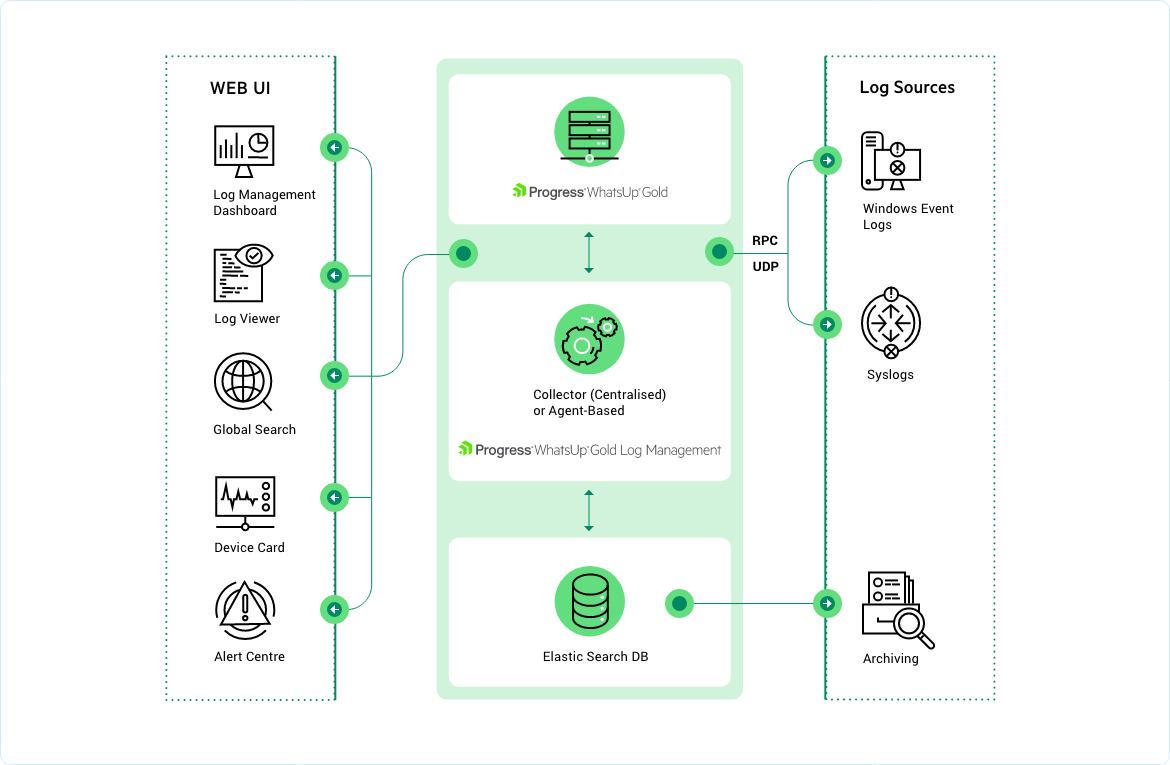

How the Log Management Add-on Works

Visualize and control your logs from WhatsUp Gold

Immediately manage logs via the same easy-to-use WhatsUp Gold interface, including mapping, customizable dashboards, alerting and reporting. Save time by diagnosing issues within the same interface as the rest of your network monitoring solution.

Trigger alerts on customizable conditions

Leverage WhatsUp Gold’s powerful and comprehensive alerting capabilities to get notified of specific log events or issues. Customize which events, conditions or trends generate alerts. Watch for meta trends like changes in log volume and get notified when they cross the thresholds you specify.

Log Management Custom Ingestion Filters

Rather than gathering every log from every source and filtering the data later, you can configure a custom filter in the Log Filter Library and apply it to the source, enabling WhatsUp Gold to gather only the log data in which you are interested.
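The idea of filtering at the source rather than after collection can be illustrated with a minimal Python sketch. This is not WhatsUp Gold code or the Log Filter Library API; `LogRecord`, `make_filter` and `ingest` are hypothetical names, and the severity scale is assumed to follow the syslog convention (0 is most severe, 7 is debug).

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator


@dataclass(frozen=True)
class LogRecord:
    """Hypothetical log record; field names are illustrative only."""
    source: str
    severity: int  # assumed syslog-style scale: 0 = emergency ... 7 = debug
    message: str


def make_filter(max_severity: int, keyword: str = "") -> Callable[[LogRecord], bool]:
    """Build a predicate that keeps records at or above a severity level
    (numerically <= max_severity) whose message contains the keyword."""
    def keep(record: LogRecord) -> bool:
        return (record.severity <= max_severity
                and keyword.lower() in record.message.lower())
    return keep


def ingest(records: Iterable[LogRecord],
           keep: Callable[[LogRecord], bool]) -> Iterator[LogRecord]:
    """Yield only the records the filter accepts, so everything else
    is discarded before it is ever stored."""
    for record in records:
        if keep(record):
            yield record


records = [
    LogRecord("router-1", 3, "Interface Gi0/1 link down"),
    LogRecord("router-1", 6, "Configuration saved"),
    LogRecord("server-2", 4, "Disk usage above 90%"),
]

errors_only = make_filter(max_severity=3)
stored = list(ingest(records, errors_only))
```

Here only the first record reaches `stored`; the informational and warning records are dropped at ingestion time instead of being collected and filtered later.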

Ingest and filter Windows Event Logs & Syslogs

Gather logs from every device in your network and use both pre-built and custom filters to narrow the results to the ones that matter. Reduce the firehose of log information so you can focus on the logs that interest you or that must be tracked for compliance purposes.

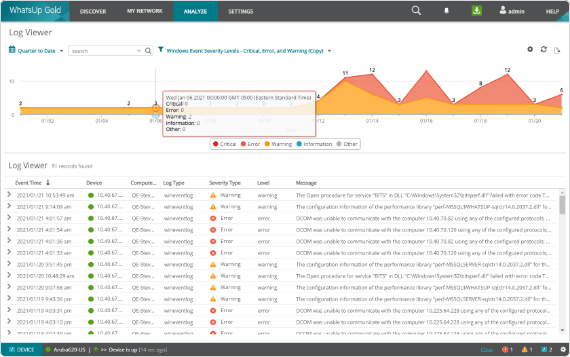

Configure log searches and export search results

Develop custom searches for specific parameters like machine name, log type, dates, log field values and more. Save those searches and export the results automatically or on demand.

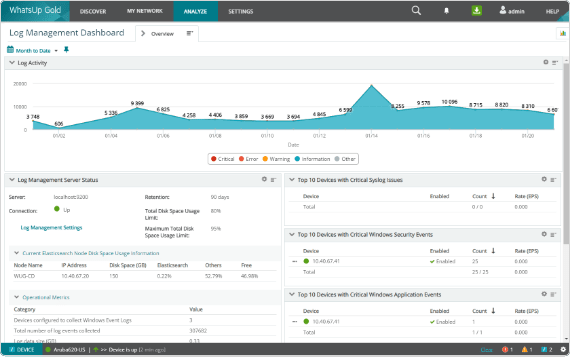

View log status and events on customizable dashboards

Show problematic events, log volumes, disk space used and other results in familiar WhatsUp Gold dashboards. Customize those dashboards to display essential log details either on their own or beside other network monitoring results for fast diagnosis.

WhatsUp Gold Licensing

Flexible licensing options to suit your organization's needs.

WhatsUp Gold delivers real-time visibility into device health, application performance and traffic flows so IT teams can ensure uptime, optimize capacity and help prevent service disruptions. It also helps reduce operational costs, accelerate troubleshooting and maintain a consistent user experience.

Business

Enterprise

Enterprise Plus

Premium

Total Plus

Get Started

Complete the form to download your free trial of WhatsUp Gold.

Learn more about log management

What are logs?

A log is like a "journal of record" for every event or transaction that takes place on a server, computer or piece of hardware. Every system in your network generates some type of log file. Microsoft systems generate Windows Event Log files. UNIX-based servers and devices use the System Log (or Syslog) standard. Apache and IIS generate W3C/IIS log files.
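As a concrete example of one of these formats, a classic BSD-style Syslog message (RFC 3164) begins with a priority value in angle brackets that encodes both the facility and the severity: priority = facility × 8 + severity. The following Python sketch decodes that prefix. The function name `decode_pri` is hypothetical, and the sample message is the example given in RFC 3164.

```python
import re

# Severity names in order of their numeric codes (0-7), per the syslog standard.
SEVERITIES = [
    "emergency", "alert", "critical", "error",
    "warning", "notice", "informational", "debug",
]

# A syslog line starts with "<PRI>", where PRI is one to three digits.
PRI_PATTERN = re.compile(r"^<(\d{1,3})>(.*)$", re.DOTALL)


def decode_pri(line: str) -> tuple[int, str, str]:
    """Split a syslog line into (facility code, severity name, remainder)."""
    match = PRI_PATTERN.match(line)
    if match is None:
        raise ValueError("line does not start with a <PRI> prefix")
    pri = int(match.group(1))
    if pri > 191:  # 23 facilities * 8 + 7 is the largest valid value
        raise ValueError(f"priority {pri} is out of range")
    facility, severity = divmod(pri, 8)
    return facility, SEVERITIES[severity], match.group(2)


sample = "<34>Oct 11 22:14:15 mymachine su: 'su root' failed for lonvick on /dev/pts/8"
facility, severity, rest = decode_pri(sample)
```

For the sample line, priority 34 decodes to facility 4 (security/authorization messages) and severity 2 (critical).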

What is log management? Why is it important?

Log management covers collecting, filtering, searching, alerting on and archiving the log data your devices generate. Centralized log management is a key component of regulatory compliance. With it you can monitor, audit and report on file access, unauthorized user activity, policy changes and other critical activities performed against files or folders containing proprietary or regulated personal data such as employee, patient or financial records. By using the log management functionality within WhatsUp Gold, you can troubleshoot log events and review relevant logs in the same interface you use for network monitoring, saving considerable time and effort.